AI forgets

Stateful agents are the future.

Published Feb 27, 2025 · Around 2 minutes to read

When I’m solving a long problem with ChatGPT, it eventually forgets the original context. Fixed context windows allow LLMs to “see” only a certain amount of previous text, so they lose track of earlier details as the conversation gets longer.
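Here's a minimal sketch of that forgetting, assuming a chat app that keeps only the newest messages that fit a fixed budget. The names `count_tokens` and `fit_to_window` are made up for illustration, words stand in for real tokens, and actual products may trim or summarize history differently.

```python
def count_tokens(text: str) -> int:
    # Crude stand-in (assumption): real tokenizers split text differently.
    return len(text.split())


def fit_to_window(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent messages whose combined size fits the window."""
    kept: list[str] = []
    used = 0
    # Walk backwards from the newest message and stop once the budget is spent.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


conversation = [
    "Goal: migrate the billing service to Postgres",  # 7 words
    "Step one is done",
    "Step two failed with a timeout",
    "Retrying step two now",
]
visible = fit_to_window(conversation, max_tokens=14)
print(visible)
```

With a 14-word budget, the three newest messages fit and the goal at the top is the one that falls out, which is exactly the detail you wanted the model to keep.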

But Claude Code does something interesting: it creates a checklist after you prompt it, and then completes the tasks one by one. The checklist lets Claude refer back to a summary of the original problem, working around the context window limitation. Checklists are friendly for the human too, since you can see the LLM’s reasoning.

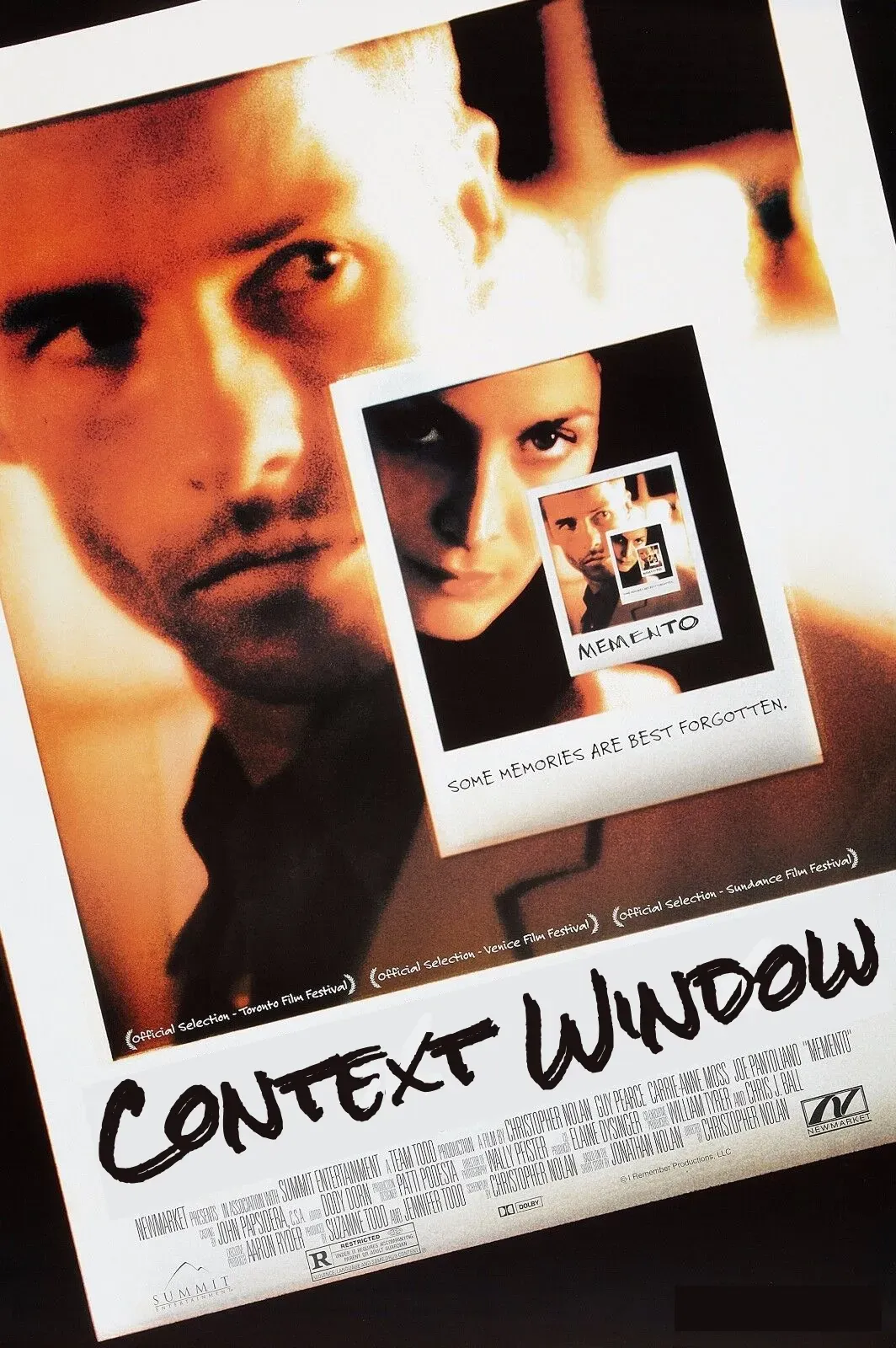
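The checklist idea can be sketched in a few lines. This is not how Claude Code is implemented; the `Checklist` class and its methods are hypothetical, and the only assumption is that a short rendered summary gets placed back in front of the model on every turn.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Checklist:
    """Hypothetical task list that doubles as a compact summary of the goal."""

    goal: str
    tasks: list[str]
    done: set[int] = field(default_factory=set)

    def complete(self, index: int) -> None:
        self.done.add(index)

    def next_task(self) -> Optional[str]:
        # First task that has not been marked done, or None when finished.
        for i, task in enumerate(self.tasks):
            if i not in self.done:
                return task
        return None

    def render(self) -> str:
        # Short text that can be re-sent with every prompt.
        lines = [f"Goal: {self.goal}"]
        for i, task in enumerate(self.tasks):
            mark = "x" if i in self.done else " "
            lines.append(f"[{mark}] {task}")
        return "\n".join(lines)


checklist = Checklist(
    goal="Add dark mode to the settings page",
    tasks=["Find the theme config", "Add a toggle", "Write tests"],
)
checklist.complete(0)
print(checklist.render())
print("Next:", checklist.next_task())
```

Because the rendered list is tiny, it can ride along with each prompt even after the original request has scrolled out of view.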

The checklist pattern reminded me of the movie Memento. Claude’s checklist is basically a set of polaroids that help it keep track of the problem.

Because LLMs forget.

Checklists are cool, but they are still stateless. What if you need to pick up a problem from a year ago? How would an LLM remember a research project you worked on with it months ago? I came across Letta, which lets you build agents with memory. These agents remember things and build on that knowledge, unlike vanilla LLM agents.
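To make "memory" concrete, here's a toy sketch of state that survives between sessions. It does not use Letta's API; `MemoryStore`, its methods, and the JSON file are all assumptions picked for illustration, and a real agent framework would do far more than append facts to a file.

```python
import json
import tempfile
from pathlib import Path


class MemoryStore:
    """Hypothetical long-term memory: facts grouped by topic, saved to disk."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.facts: dict[str, list[str]] = {}
        if path.exists():
            # Load whatever an earlier session wrote.
            self.facts = json.loads(path.read_text())

    def remember(self, topic: str, fact: str) -> None:
        self.facts.setdefault(topic, []).append(fact)
        self.path.write_text(json.dumps(self.facts))

    def recall(self, topic: str) -> list[str]:
        return self.facts.get(topic, [])


memory_file = Path(tempfile.mkdtemp()) / "agent_memory.json"

# First session: the agent learns something and writes it down.
first_session = MemoryStore(memory_file)
first_session.remember("research", "Compared three vector databases")

# A later session starts from scratch but reads the same file.
second_session = MemoryStore(memory_file)
recalled = second_session.recall("research")
print(recalled)
```

The second `MemoryStore` shares nothing with the first except the file, yet it still knows about the research project. That is the difference between a checklist and an agent with state.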

Atomic Research or a Second Brain would be really cool applications of this.

—

P.S. Thanks to Austin Vance for inspiring this post. He showed a neat demo of Claude Code. I forgot what he was building though.
